Ddseddse


TLDW (Too long; didn't watch): "Please, DARPA, shower me with cash!"


One interesting thing to follow is AI data centers. Looks like they are running into supply chain constraints as well as local pushback (NIMBY). Municipalities like the property tax revenue, but data centers don't provide any other benefit and bring lots of drawbacks.

I work in commercial lending, although not specifically with data centers. That said, it's a hot-button topic because of the exposure lenders (including private lenders) have to data centers. I think a lot of lenders are capping exposure at current levels until the path forward is clearer. All that to say, it's not quite as simple as many believe: “if you build it, AI will come.”

The biggest bottleneck is electricity, followed by electrical equipment like transformers, then cooling equipment, then technicians to install it.

And providers (especially electricity providers) are hesitant to create new supply, as they fear the whole thing will crash, leaving them with excess capacity and lots of loans to pay off.

There are a lot of short-term benefits, like property taxes and the economic activity from new construction. But once the data center is built, it doesn't need many people to keep it running.

This may be a dumb question, but I believe it is your field. How is the energy required by prompts/agents/etc. allocated? Is the company that “employs” the agents charged for the energy required to power them?


That's a little different part of the value chain than I typically work in, but here is my guess. Sometimes a company owns the data center and rents out space inside it. AI companies like OpenAI and Anthropic then rent that space, install their own servers and other equipment, and pay the electricity charges either to the data center operator (who pays the utility) or directly to the electric utility.

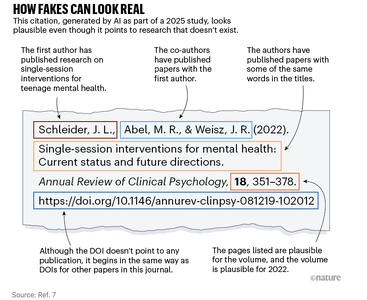

This diagram is a LITERAL description of the one and ONLY thing an LLM (Large Language Model) is capable of doing.

Hallucinated citations are polluting the scientific literature. What can be done? Tens of thousands of publications from 2025 might include invalid references generated by AI, a Nature analysis suggests.

So while journalism is dying a slow death, climate stability is dying a slow death, and democracy is being tested, science is also morphing into pseudoscience? Awesome, happy Monday.

No offense, but this is not an accurate picture of what LLMs do or how they work. It is vastly misleading. The whole "it's predicting the next word" line doesn't really mean what it's often interpreted to mean.

To wit: produce a statistically probable amalgam of the stuff it was fed in its training dataset.

If you are expecting an LLM to do something different, the problem is not with the LLM; it's with your lack of understanding of what an LLM is capable of doing.